AI Initiative

Greek philosophy may seem a strange starting point for an article about something that would have struck the philosophers themselves as impossibly futuristic, but bear with me! The great Greek philosopher Democritus, regarded by some as the ‘father of modern science’, developed an early atomic theory in the fifth century BC. His questioning of the material world is regarded by many as the beginning of atomic physics, and his ideas about the nature of atoms, their structure and behaviour, foreshadow what is now a cornerstone of science – more specifically, quantum mechanics. Like many ancient inquiries, Democritus’ theory withered and all but disappeared for some 2,000 years until, early in the nineteenth century, the British chemist John Dalton revived the concept of the atom in his writings and lectures. Later, in 1932, the British physicist John Cockcroft and the Irish physicist Ernest Walton split the atom, confirming Einstein’s famous E=mc². Just over a decade later, in 1945, the first atomic bombs were dropped on Hiroshima and Nagasaki.

This historic, ‘atomic’ chain of events offers an essential insight into our wiring as human beings. The discovery of the atom ignited huge optimism about the power of science to create a better world. But, undeniably and demonstrably, nuclear technology in practice showed how scientific progress can be tragically bittersweet.

Fast forward to the 21st century, and Artificial Intelligence (AI) is at a similar juncture to the atom in the mid-twentieth century; like the atom, its means and ends need careful consideration.

With ongoing technological advancement, deliberation, and profound effort to make AI a part of everyday life, many nations are becoming increasingly competitive in realising the AI dream. The UK is considered a leader in AI development, primarily due to its rich computer science history (kudos, Alan Turing!), and it ranked third globally for private investment into emerging AI in 2020, behind only the USA and China (National AI Strategy, p. 10).

It is clear we are in the midst of another critical paradigm shift in history, greeted with the same enthusiasm that once surrounded the atom.

In the last decade, however, the prospect of a realisable automated landscape has rekindled some difficult questions about scientific endeavour [1]. The rapid development, applicability, and effectiveness of any science now always comes with fervent discourse about ethics, and rightly so if we are to learn from history.

Unsurprisingly, the thorny subject of sustainability has risen to the top of the agenda, fuelled by other key narratives like environmental wellbeing and climate change, and these topics have added impetus to a new wave of AI development. If we break this development down into phases, the first would be the pioneering phase, where AI was discussed counterfactually: how could AI solve world hunger, combat terminal illness and incurable diseases, or deliver peace and economic prosperity to the world's nations? The second phase, also known as the machine learning (ML) phase, focused on the nitty-gritty of AI, giving life to topics such as the explainability dilemma (black-box versus white-box technology and measures of transparency), which deals with AI identity and specific sub-topics like machine recognisance.

Sustainable AI Development

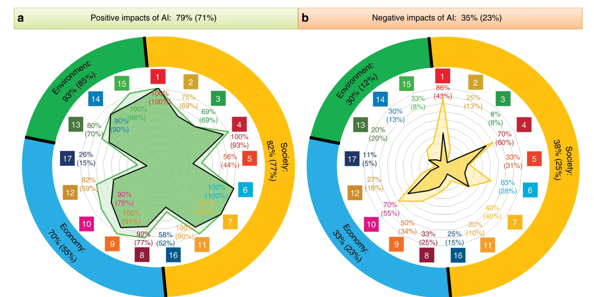

In the newest phase, the momentum surrounding AI sustainability has rapidly increased. The topic has been embraced by the UN, no less, through its Sustainable Development Goals (SDGs) initiative, in which 17 goals have been identified as a call to action to address the global challenges we face. A well-documented elicitation process by Vinuesa et al. (2020) illustrated the effects of AI more broadly: it could enable delivery on 134 targets and inhibit delivery on 59 targets across the SDGs. The authors grouped the goals into three brackets (Society, Economy, and Environment) and highlighted the relationship between AI and all 17 goals and 169 targets recognised in the 2030 Agenda for Sustainable Development. The graph shown here is an insightful summary of their findings, which subtly highlights the tension (or cost/benefit) of living in an AI landscape.

The numbers inside the coloured squares signify each Sustainable Development Goal (SDG). The percentages on the top indicate the proportion of all targets potentially affected by AI and the ones in the inner circle of the figure correspond to proportions within each SDG. The results corresponding to the three main groups, namely Society, Economy, and Environment, are also included in the outer rings. The results obtained when the type of evidence is considered are shown by the inner shaded area and the values in brackets.

Ecological AI

For the sake of brevity, the rest of this entry focuses on the ecological aspect of AI sustainability. Given that we live in a polluted world in constant flux, my perspective is that the new wave of development is about achieving an acceptable level of throughput while maintaining high levels of innovation, efficiency, and productivity.

“Hold on! What do you mean by AI and Sustainability!?” Here, AI is the operation or process of some model/machine to achieve an intended outcome. Importantly, the sustainability of AI need not be judged only at the macroscopic level of its entire lifecycle. Instead, each stage of implementation and refinement should be assessed independently.

In sum, and quite trivially then, ‘sustainable’ AI refers to developing and running a machine that perpetuates a steady-state system (or homeostasis for the smarties out there): a near-uniform process that changes little or not at all over time. In simpler words, AI development is sustainable insofar as its social benefits offset its social costs (more granularly, up to the point where the marginal benefit of one more unit of AI equals its marginal cost). [2]
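The marginal condition can be written out as a short sketch. The symbols are illustrative assumptions rather than notation from the cited sources: $q$ is the amount of AI deployed, $B(q)$ its total social benefit, and $C(q)$ its total social cost, both assumed differentiable, with $B$ concave, $C$ convex, and an interior optimum $q^{*}$.

$$
\max_{q \ge 0} \; N(q) = B(q) - C(q)
\quad\Longrightarrow\quad
N'(q^{*}) = 0
\quad\Longleftrightarrow\quad
B'(q^{*}) = C'(q^{*})
$$

Below $q^{*}$, one more unit of AI adds more benefit than cost; beyond it, the reverse holds.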

A famous study by Strubell et al. (2019) highlighted the importance of thinking about sustainable outcomes. Training a single, isolated, deep learning natural language processing (NLP) model can potentially result in approximately 280,000 kg of CO2 emissions – that’s equivalent to driving between Massachusetts and Salt Lake City fifty-six times. Given such striking findings, the question is whether the pollution from sophisticated ML models, which in a field such as game development can seem unnecessary, is really worth it. (We’ll leave the philosophers to work out the deontology on this one.)
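To make the arithmetic behind this kind of estimate concrete, here is a minimal sketch of a common back-of-the-envelope calculation: the energy drawn by the hardware, scaled up by the data centre's overhead (its power usage effectiveness, or PUE), then multiplied by the carbon intensity of the electricity. The function name and every input figure are illustrative assumptions, not values taken from Strubell et al. or from any real training run.

```python
def training_emissions_kg(avg_power_watts, hours, pue, kg_co2_per_kwh):
    """Rough CO2 estimate (in kg) for a single training run.

    avg_power_watts: average combined draw of the training hardware (assumed input)
    hours:           wall-clock training time
    pue:             power usage effectiveness of the data centre (>= 1.0)
    kg_co2_per_kwh:  carbon intensity of the electricity supply
    """
    if avg_power_watts < 0 or hours < 0 or kg_co2_per_kwh < 0:
        raise ValueError("power, hours and carbon intensity must be non-negative")
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")

    hardware_kwh = avg_power_watts * hours / 1000.0  # watt-hours -> kWh
    facility_kwh = hardware_kwh * pue                # add cooling and other overhead
    return facility_kwh * kg_co2_per_kwh


if __name__ == "__main__":
    # Hypothetical run: 8 accelerators at 300 W each for 100 hours,
    # in a facility with PUE 1.5, on a grid emitting 0.4 kg CO2 per kWh.
    estimate = training_emissions_kg(8 * 300, 100, 1.5, 0.4)
    print(f"{estimate:.1f} kg CO2")  # prints "144.0 kg CO2"
```

Because the PUE and the carbon intensity enter as simple multipliers, the same training job can have a very different footprint depending on where and how it is run.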

Empirical evidence and awkward questions convey how vital the sustainability topic really is. The aim is (or should be) to strike a balance between energy saved and energy expended. Knowing that our company specialises in Conversational AI, CX, curiosity, and all things circus-related (our team shared their hidden talents at a recent company get-together), I embarked on a friendly internal investigation into our views on sustainable AI.

ContactEngine’s AI & Sustainability

In a riveting meeting with our CEO, we discussed the origins of the company and how the sustainability of our AI was a by-product of investing in good people with heterodox mindsets. Thanks to our intellectual diversity and forward-looking perspective, ContactEngine made considerate, sustainable decisions without having to draft endless and monotonous plans. Our character and experience (personal included) cultivated sustainability within our product naturally. Logistically, our product has lessened CO2 emissions for our clients and has had significant positive effects on their efficiency, productivity, and business capacity.

The final thread in our conversation was the connection between AI development and humans. My takeaway was that coming to terms with being ‘fallen creatures’ will help smooth the transition to the next paradigm. This doesn’t mean that we’re hoping for a galactic robot-president to be our guide… Recalling our atom story, it means our progress will inevitably uncover and harness powerful technology; its external impact, however (be it on the environment or society as a whole), hinges on understanding and implementing its use-value before becoming concerned with its scientific or commercial value.

Legislation is vital in aligning strategy and human endeavour with the SDGs and controlled intent – it also quietens scepticism somewhat, as clear and distinct boundaries would be in place. Ultimately then, integrating AI into our world with little to no friction depends on clearing the path for AI development with careful but favourable legislation; improvising can carry us far, but in an unintended direction. Sustainability measures are lamps unto our feet.

In another, more technical discussion with our AI Head, whose party talent was playing the electric guitar, I discovered that our AI engineers are extremely disciplined. We analyse a model’s use-value based on its applicability and intended effectiveness in specific situations; the models we deploy are those most compatible with the circumstances, and we work hard to grasp their intended outcome before turning on the processors. In more detail:

- We evaluate the source of our compute – for instance, big cloud providers have different sustainability agendas and different operating methods, like cooling systems, that should be taken into consideration and could make a world of difference depending on the sustainability priorities you (or your organisation) have adopted.

- We raise awareness about the (potential) consequences of training medium-to-large models for negligible improvement, and we minimise wasted computational resources, not only for the sake of lowering costs and saving time but for social benefit too… we really take pride in and care about what we do!

- We’re constantly innovating!
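
The second point above, avoiding training that yields negligible improvement, is often put into practice with an early-stopping rule. The following is a minimal sketch of that general technique, not a description of ContactEngine’s actual tooling; the function name, the `min_delta` and `patience` parameters, and the example scores are all hypothetical.

```python
def should_stop(val_scores, min_delta=0.001, patience=3):
    """Decide whether further training is likely to be wasted compute.

    val_scores: validation scores so far, one per evaluation (higher is better)
    min_delta:  smallest gain over the previous best that counts as improvement
    patience:   number of consecutive evaluations without improvement to tolerate
    """
    if patience < 1:
        raise ValueError("patience must be at least 1")
    if len(val_scores) <= patience:
        return False  # not enough history to judge yet

    best_before = max(val_scores[:-patience])  # best score before the recent window
    recent = val_scores[-patience:]
    # Stop only if none of the recent evaluations beat the earlier best by min_delta.
    return all(score < best_before + min_delta for score in recent)


if __name__ == "__main__":
    plateau = [0.70, 0.78, 0.81, 0.8102, 0.8099, 0.8105]
    improving = [0.70, 0.78, 0.81, 0.83]
    print(should_stop(plateau))    # True: the last three runs gained almost nothing
    print(should_stop(improving))  # False: the model is still improving
```

The thresholds are a judgment call: a larger `min_delta` or a smaller `patience` saves more compute at the risk of stopping a model that would still have improved.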

On the final point, our conversation ended on crucial research areas that fascinate our AI team and that we’re excited to see evolve. Generally, the field of transformer-type language models is young; however, excellent progress has already been made, and with even more development on the horizon, AI may well stumble on something ground-breaking without announcement or fuss.

AI Innovations – Knowledge Distillation

A further area of R&D we discussed was knowledge distillation (KD) – the idea being that larger models ‘teach’ smaller ones, narrowing the performance gap between them. The motivating aim here is compression: deploying a smaller model without compromising accuracy. That is, wherever larger, more sophisticated models are impractical, instead of consuming massive amounts of energy to re-evaluate the model, its knowledge (or operated-upon-data, if you will) can be transferred to another model/process which is smaller but, ideally, as effective. Hence, the architecture and discussions surrounding KD usually involve terms like “teacher”, “student”, “likelihood”, “generalisation”, and “regularisation”. One term heard less often is “fidelity”: because the smaller model (student) lacks the computational wherewithal and richness of data of the larger model (teacher), and seeks only to imitate it rather than necessarily replicate and replace it, some uncertainty remains, raising the question of whether KD is effective in reality (see Stanton et al., 2021).

To help the layperson (like me!), KD is analogous to Santa (a large model) teaching his elvish helpers (smaller models) how to deliver presents and spread festive cheer. As impressive as Santa may be, it’s still impossible for him to visit every home in the world, eat all the cookies, and neatly stack the presents under the tree… So, Santa employs little helpers to avoid breaking the hearts of many kids!
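
For readers who prefer code to Christmas, the teacher/student idea can be sketched in a few lines. This is a minimal, dependency-free illustration of the widely used soft-target formulation of KD, in which the student is trained to match the teacher’s temperature-softened output distribution as well as the true label. It is an assumption-laden sketch rather than ContactEngine’s implementation: the function names, the `temperature` and `alpha` defaults, and the logits are all made up, and fidelity is measured here simply as top-1 agreement between student and teacher.

```python
import math


def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; a higher temperature gives a softer distribution."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, true_label,
                      temperature=2.0, alpha=0.5):
    """Weighted sum of a soft (imitate the teacher) and a hard (match the label) term."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    # Soft term: KL divergence from the student's to the teacher's softened distribution.
    # (Assumes no student probability underflows to zero, which holds for moderate logits.)
    kl = sum(pt * math.log(pt / ps)
             for pt, ps in zip(p_teacher, p_student) if pt > 0)
    # Hard term: ordinary cross-entropy against the true label at temperature 1.
    ce = -math.log(softmax(student_logits)[true_label])
    # The T^2 factor keeps the soft term's scale comparable across temperatures.
    return alpha * temperature ** 2 * kl + (1 - alpha) * ce


def fidelity(student_batch, teacher_batch):
    """Fraction of examples on which student and teacher pick the same top class."""
    def top(logits):
        return max(range(len(logits)), key=lambda i: logits[i])
    agree = sum(top(s) == top(t) for s, t in zip(student_batch, teacher_batch))
    return agree / len(student_batch)


if __name__ == "__main__":
    teacher = [4.0, 1.0, 0.5]  # hypothetical teacher logits for one example
    student = [2.5, 1.5, 0.2]  # hypothetical student logits for the same example
    print(distillation_loss(student, teacher, true_label=0))
    print(fidelity([student], [teacher]))  # 1.0: both pick class 0
```

A student can score well on the hard term while still disagreeing with its teacher on many inputs, which is why fidelity is worth tracking separately from accuracy.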

Conclusion

In sum, sustainability is a hot topic within AI. Here at ContactEngine, we use our adaptability, prudence, and inquisitive nature to reach sustainable ends daily, by keeping the clients and people we serve at the heart of our business! We still make calculated decisions, but the character of our products reflects that of a workforce that wants to nurture a better and greener future. In fact, our product itself contributes to reaching a steady-state ecosystem (i.e. net zero) by reducing CO2 emissions and minimising energy expenditure. As cutting-edge topics emerge and sustainability itself rises higher on the global agenda, ContactEngine doesn’t just aim to be there every step of the way. With our unique personality, initiative, and goals, we aspire to be a few steps ahead!